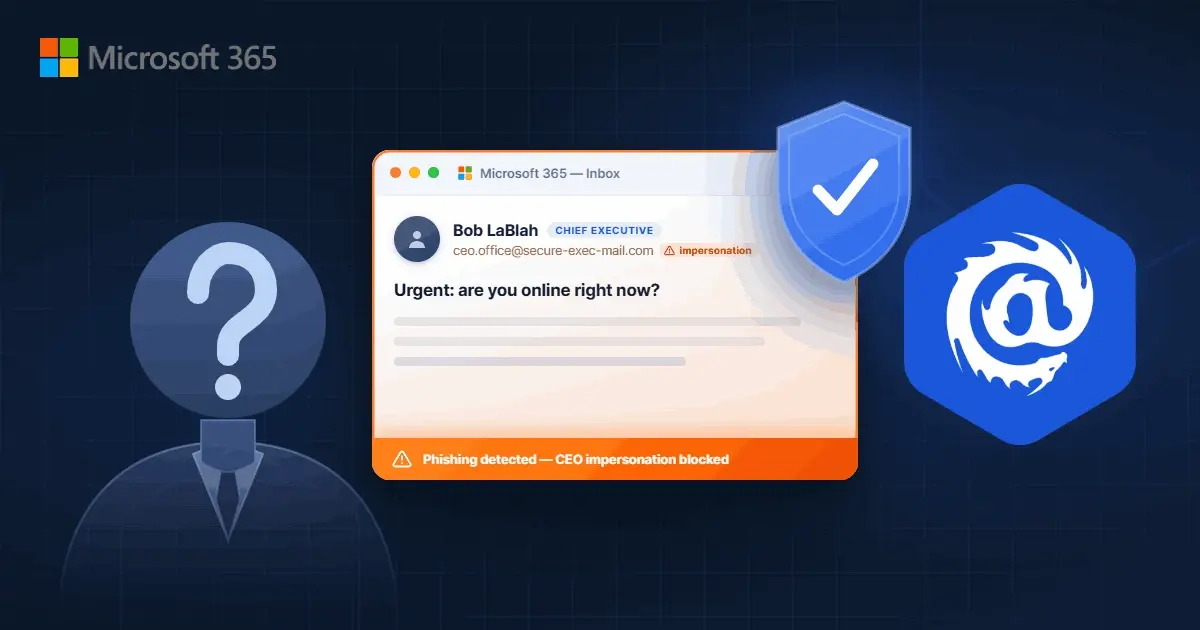

How to Stop CEO Impersonation in Microsoft 365 in 7 Steps (2026)


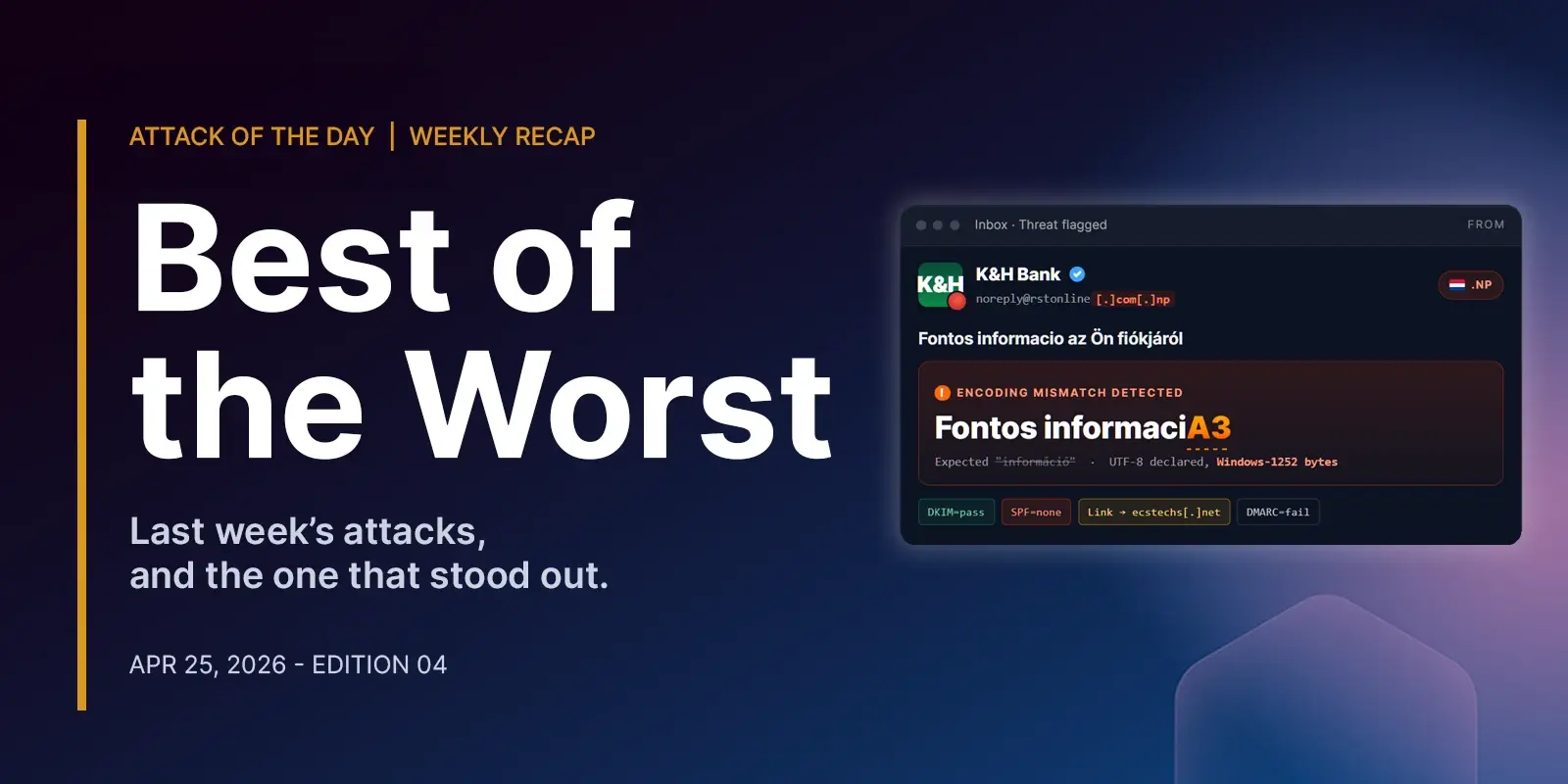

CEO impersonation attacks slip through native Microsoft 365 filters more often than most IT admins expect. Attackers spoof display names, register lookalike domains, and mimic communication patterns to...

